Runtime Procedural Generation

A tower defense game

Overview

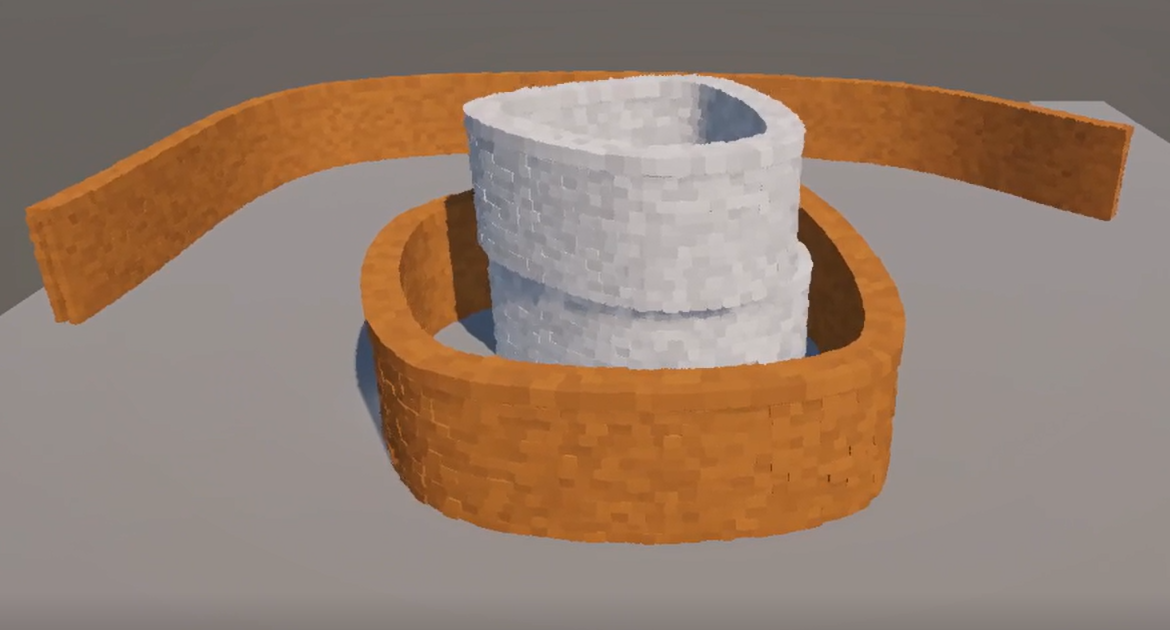

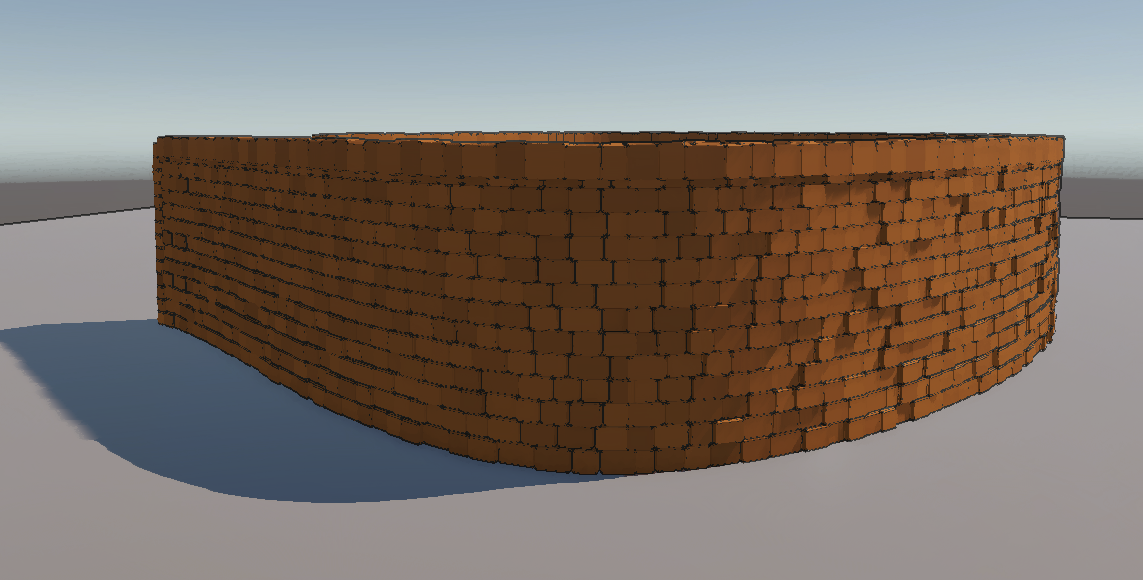

Starting off as a tech demo, this game has grown into an ongoing part-time project with my friends. Currently, I'm leading the development of the core feature: procedurally generated buildings that can be interacted with in real time.

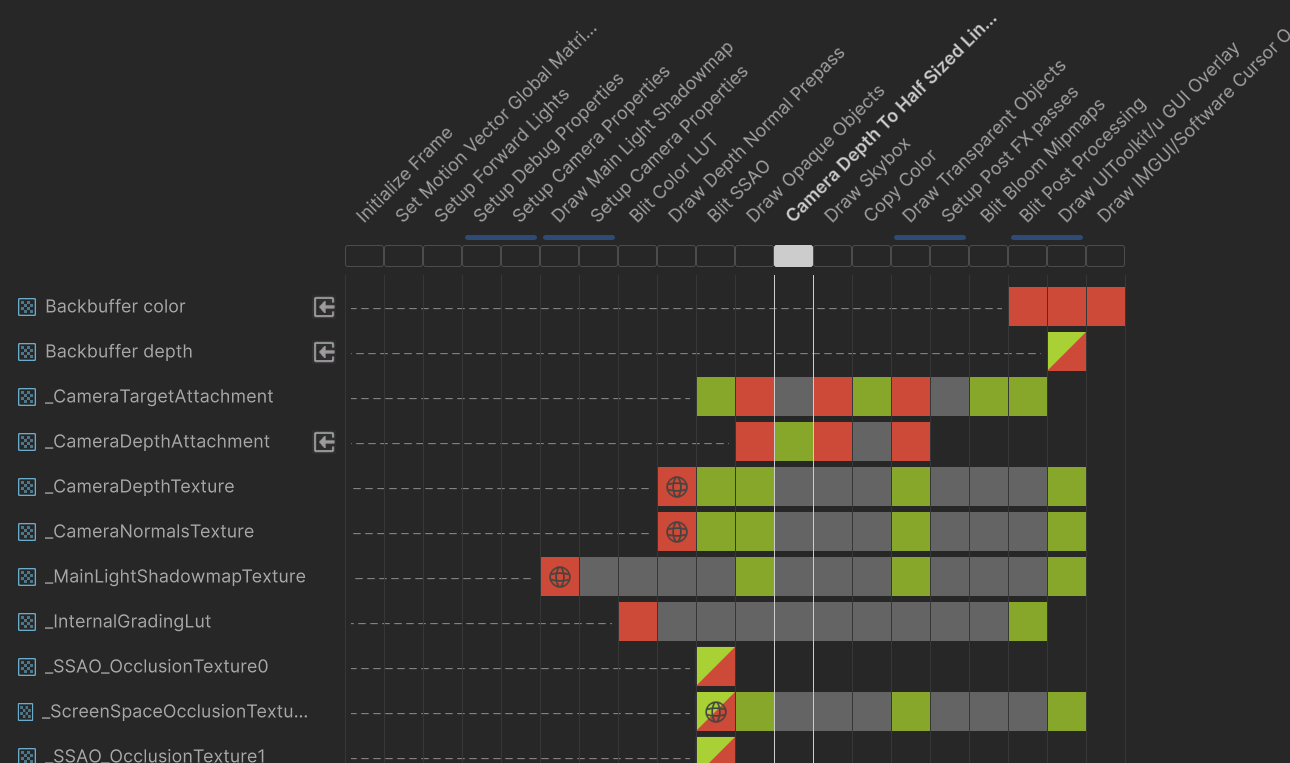

The realtime PCG pipeline:

Building data is managed on the CPU

(Bezier splines, oriented bounding boxes, and analytical shapes)

↓ Pass to GPU buffers

Compute shaders generate transforms for each brick mesh

↓ Append transforms to a buffer

Cull brick meshes that cannot be seen (tested for both the camera view and the shadow passes)

↓ Append surviving instances to another buffer for rendering

Draw all remaining meshes using the RenderMeshIndirect API
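The stages above can be sketched on the CPU as a small reference model. This is a minimal Python sketch under stated assumptions, not the project's actual C#/HLSL code: the function names (`generate_brick_transforms`, `cull_and_compact`), the single cubic Bezier segment, the even spacing of bricks along the curve, the yaw convention, and the bounding-sphere-versus-plane test are all hypothetical stand-ins chosen for illustration. In the real pipeline these loops would presumably run as compute shader threads writing to GPU buffers.

```python
import math

def bezier_point(p0, p1, p2, p3, t):
    """Evaluate a cubic Bezier curve at parameter t in [0, 1]."""
    u = 1.0 - t
    w0, w1, w2, w3 = u * u * u, 3 * u * u * t, 3 * u * t * t, t * t * t
    return tuple(w0 * a + w1 * b + w2 * c + w3 * d
                 for a, b, c, d in zip(p0, p1, p2, p3))

def bezier_tangent(p0, p1, p2, p3, t):
    """First derivative of the cubic Bezier curve at t."""
    u = 1.0 - t
    return tuple(3 * u * u * (b - a) + 6 * u * t * (c - b) + 3 * t * t * (d - c)
                 for a, b, c, d in zip(p0, p1, p2, p3))

def generate_brick_transforms(control_points, brick_count):
    """Stand-in for the transform-generation pass: one (position, yaw) per brick.

    Assumption: bricks are spaced evenly in t and rotated about the Y axis
    to follow the curve tangent (yaw = atan2(x, z), with +Z as forward).
    """
    transforms = []
    for i in range(brick_count):
        t = (i + 0.5) / brick_count
        position = bezier_point(*control_points, t)
        tx, _, tz = bezier_tangent(*control_points, t)
        transforms.append((position, math.atan2(tx, tz)))
    return transforms

def sphere_outside_plane(center, radius, plane):
    """plane = (nx, ny, nz, d) with an inward-facing unit normal.

    A point is inside when dot(n, p) + d >= 0, so a sphere is fully
    outside when its signed distance is below -radius.
    """
    nx, ny, nz, d = plane
    return nx * center[0] + ny * center[1] + nz * center[2] + d < -radius

def cull_and_compact(transforms, planes, brick_radius):
    """Stand-in for the culling pass: keep bricks not fully outside any plane.

    The returned list plays the role of the append buffer; its length is
    the instance count an indirect draw would read.
    """
    return [(pos, yaw) for pos, yaw in transforms
            if not any(sphere_outside_plane(pos, brick_radius, p) for p in planes)]

# Usage: a straight wall along +X, clipped by one plane that keeps x <= 4.
wall = ((0.0, 0.0, 0.0), (3.0, 0.0, 0.0), (6.0, 0.0, 0.0), (9.0, 0.0, 0.0))
bricks = generate_brick_transforms(wall, 9)
visible = cull_and_compact(bricks, [(-1.0, 0.0, 0.0, 4.0)], 0.25)
print(len(bricks), "generated,", len(visible), "kept for drawing")
```

A shadow pass could reuse `cull_and_compact` with a different set of planes (for example, the light's volume instead of the camera frustum), which is one way to read the "camera view and shadow" culling in the list above.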

Duration

07/2025 - now

Tools

Unity, URP, C#, HLSL, Unreal

On this page:

#Trial and error

#Realtime procedural generation

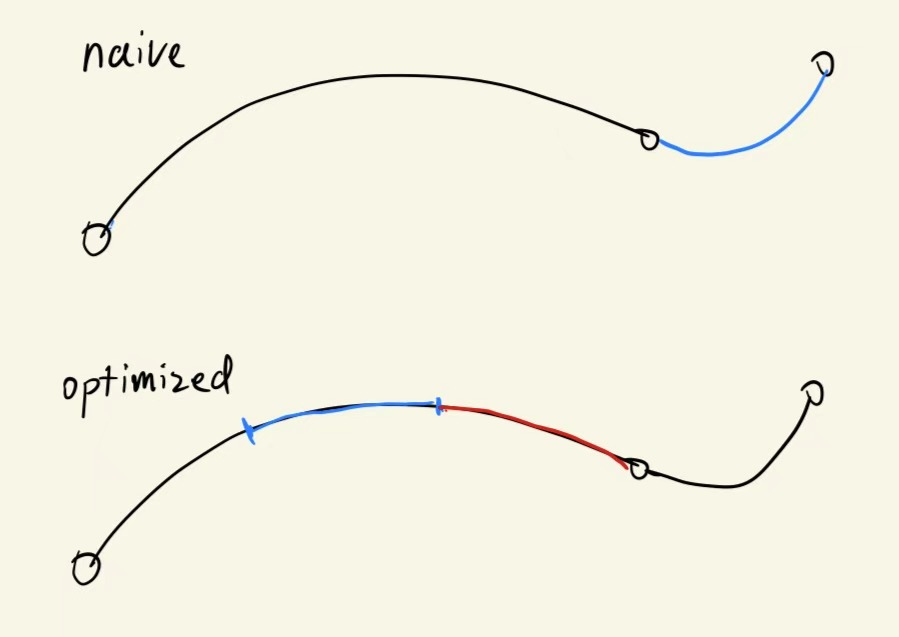

#Rendering optimization

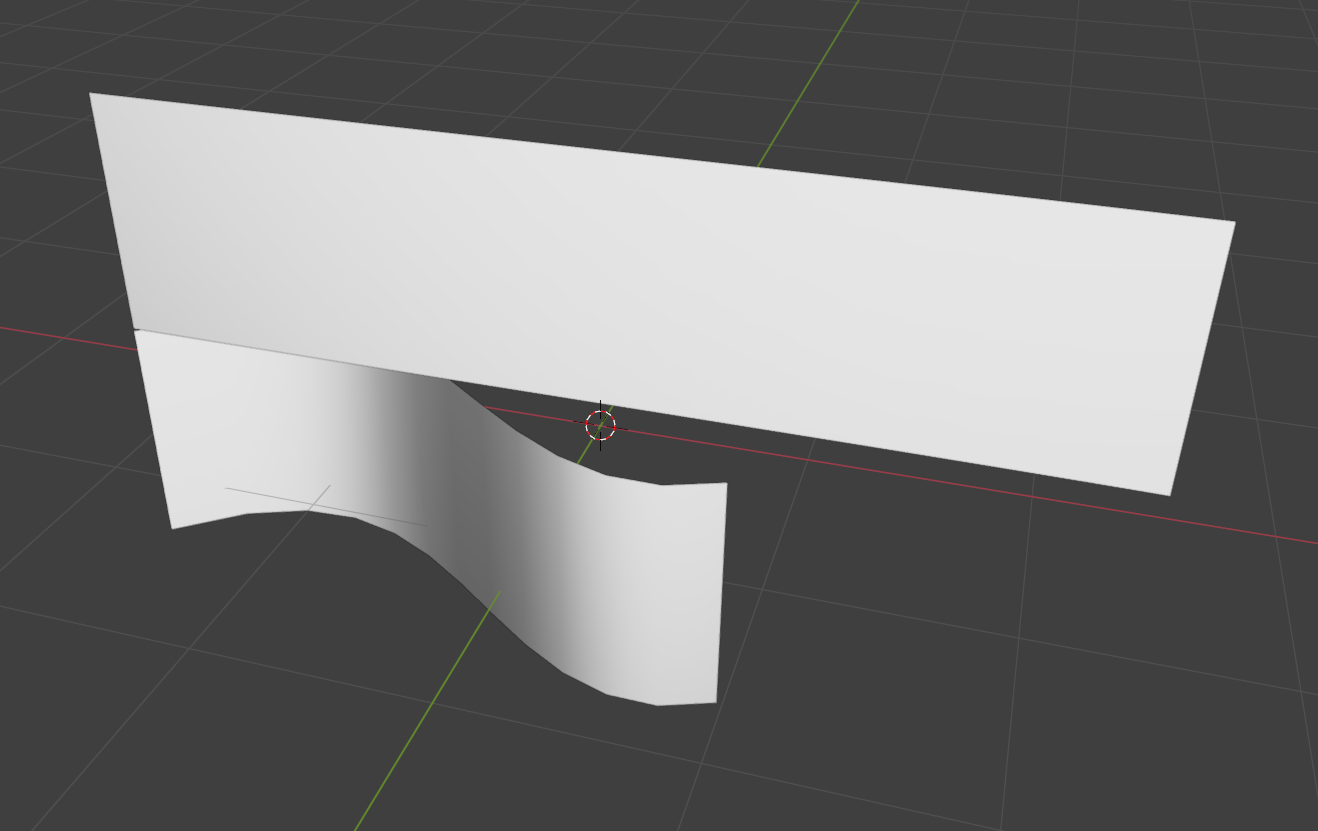

#Aesthetic

#Editor Tool

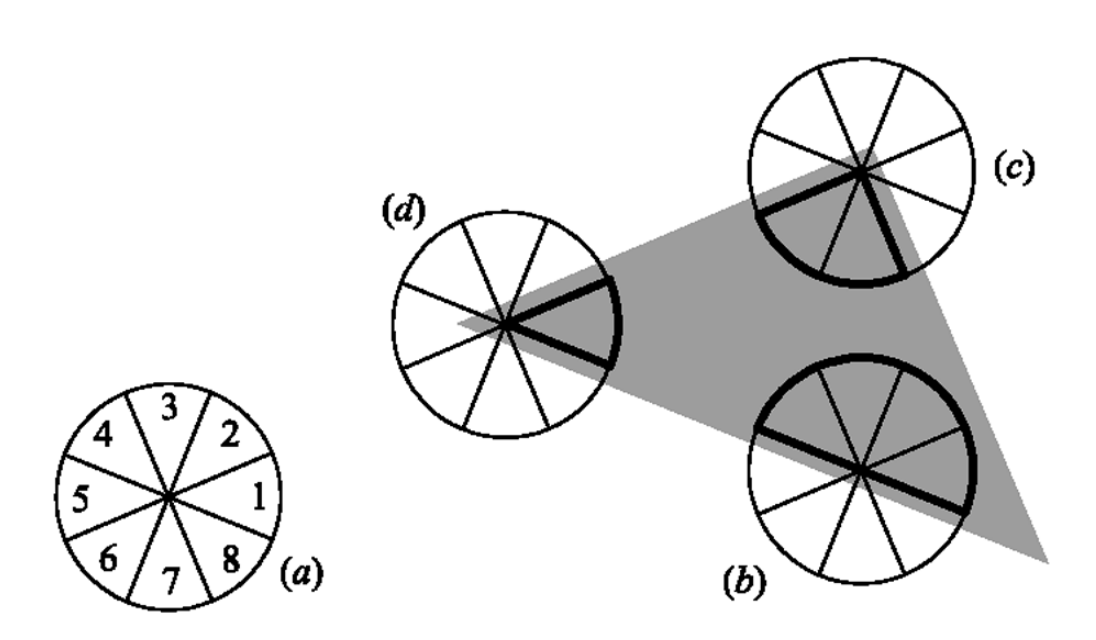

^ the 8 slices surrounding the target fragment, image from the original paper by Papari et al.
